Managed and hosted

Qubinets for Apache Kafka®

Apache Kafka as a fully managed service, with zero vendor lock-in and a full set of capabilities to build your streaming pipeline.

No credit card required. No additional fees. All features included.

Introduction

What, how, where

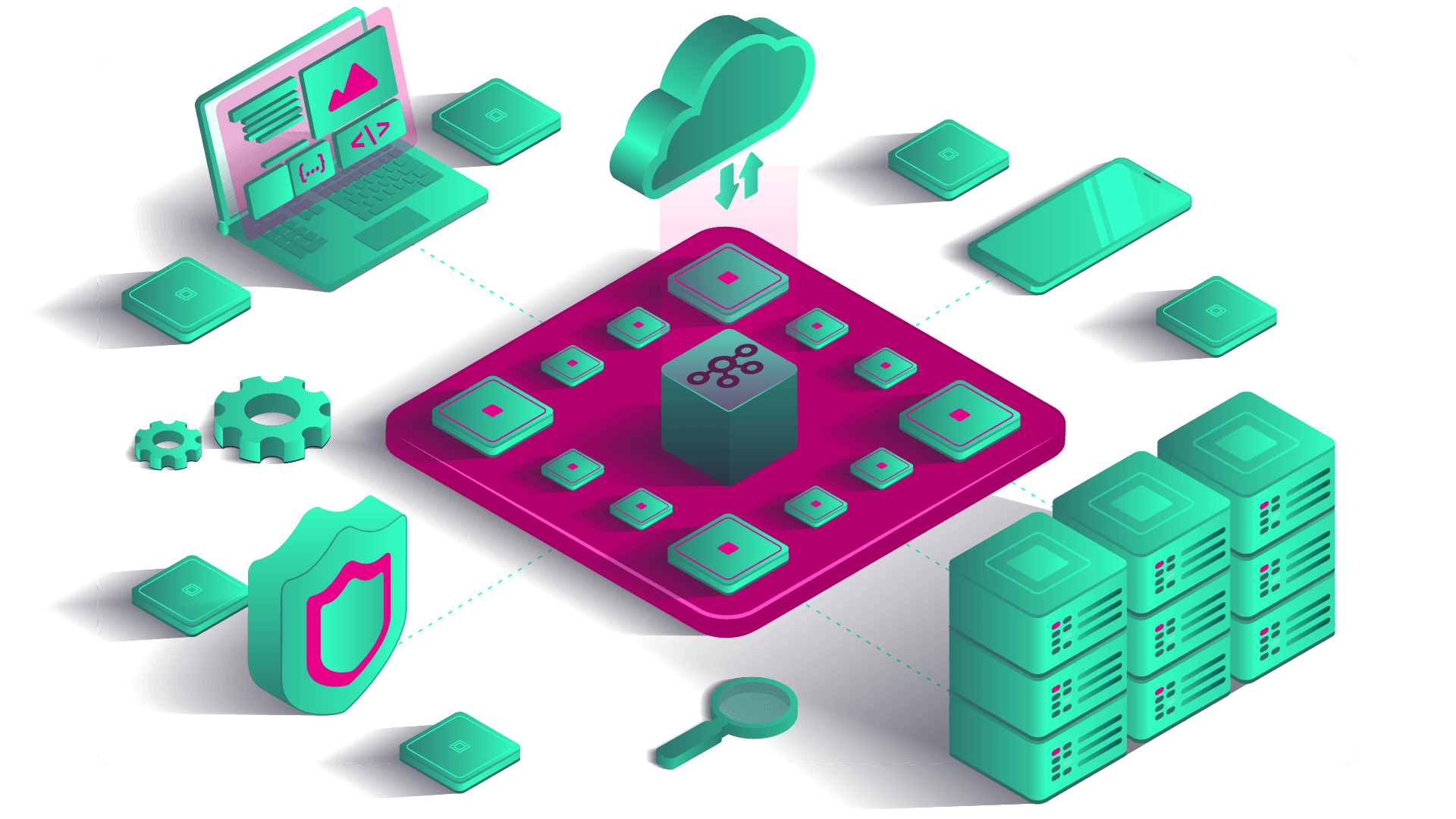

Within the Qubinets platform, Apache Kafka® becomes the best tool for streaming and queuing in large-scale, always-on applications.

The Qubinets platform enables anyone, even someone who has never written a line of code, to build and deploy Apache Kafka® in a matter of minutes. A few drag-and-drop actions, and your Apache Kafka® is up and running.

The Qubinets platform and its components, such as Apache Kafka®, are packaged and run on Kubernetes.

The Qubinets platform manages your data infrastructure so that you can focus on your business.

Benefits

Benefits of Qubinets for Apache Kafka® as-a-service

With Qubinets’ hosted, managed-for-you Apache Kafka, you can set up clusters, deploy new nodes, migrate between clouds, and upgrade existing versions in a single click, then monitor everything through a simple dashboard. That way, you can get back to building applications without worrying about Kafka’s complexity.

Automatic updates and upgrades. Zero stress.

Stressing about applying maintenance updates or version upgrades to your clusters? Do them both in a single click from your Qubinets dashboard. With no interruptions or downtime. Ever.

99.99% uptime. 100% human support.

Downtime is a disaster for critical applications. That’s why Qubinets makes sure you get 99.99% uptime. Plus, you get access to a 100% human support team, in case you need a helping hand.
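To put the 99.99% figure in concrete terms, the downtime an availability target allows is simply (1 - availability) multiplied by the length of the measurement period. The helper below is an illustrative sketch; the page does not say whether the target is measured per month or per year, so both a 365-day year and a 30-day month are shown as assumptions.

```python
# Period lengths in minutes (assumed windows: a non-leap year and a 30-day month).
MINUTES_PER_YEAR = 365 * 24 * 60     # 525,600
MINUTES_PER_30_DAYS = 30 * 24 * 60   # 43,200


def downtime_budget_minutes(availability: float, period_minutes: int) -> float:
    """Maximum downtime (in minutes) permitted by an availability target over a period."""
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be a fraction between 0 and 1")
    return (1.0 - availability) * period_minutes


print(round(downtime_budget_minutes(0.9999, MINUTES_PER_YEAR), 2))     # 52.56
print(round(downtime_budget_minutes(0.9999, MINUTES_PER_30_DAYS), 2))  # 4.32
```

In other words, 99.99% uptime corresponds to roughly 52.6 minutes of downtime per year, or about 4.3 minutes per 30-day month.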

Super-transparent pricing. No networking costs.

Qubinets for Apache Kafka comes with all-inclusive pricing. No hidden fees or charges, just one payment that covers everything from networking to data storage.

Scale up or scale down as you need.

Increase your storage, get more nodes, create new clusters or expand to new regions. Apache Kafka has never been this easy.

Use cases

How Apache Kafka® is used by Qubinets customers

Using a range of supervised learning models, from decision trees to LSTMs, it is possible to train models that predict the next points in a series or classify sequences. When training data is insufficient, unsupervised approaches such as isolation forests (iForest), k-means, or DBSCAN can be used instead. Optionally, autoencoders, which rely on self-supervision (the same data serves as both input and target), can learn what normal data sequences look like. Note: because metric data is collected synchronously at fixed intervals (usually every few minutes), it can hardly be used for real-time observation.
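As a minimal illustration of the unsupervised route, the sketch below fits plain k-means (Lloyd's algorithm) to historical values of a single metric and flags new values that lie far from every learned centroid. It is pure Python so it runs without dependencies; the function names, the quantile-based initialization, and the "3x the largest training distance" threshold are illustrative assumptions, not a description of how Qubinets customers' models are built. A production setup would more likely use a library implementation (for example scikit-learn's `KMeans` or `IsolationForest`) on multi-dimensional features such as windows of consecutive points.

```python
from typing import List, Tuple


def kmeans_1d(values: List[float], k: int, max_iter: int = 100) -> List[float]:
    """Lloyd's k-means on scalar metric values; returns the k centroids."""
    ordered = sorted(values)
    n = len(ordered)
    # Deterministic initialization: take k evenly spaced quantiles of the data.
    centroids = [ordered[(2 * i + 1) * n // (2 * k)] for i in range(k)]
    for _ in range(max_iter):
        clusters: List[List[float]] = [[] for _ in range(k)]
        for v in values:
            # Assignment step: each value joins the cluster of its nearest centroid.
            nearest = min(range(k), key=lambda i: abs(v - centroids[i]))
            clusters[nearest].append(v)
        # Update step: move each centroid to its cluster mean (keep it if the cluster is empty).
        updated = [sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)]
        if updated == centroids:  # centroids stopped moving: converged
            break
        centroids = updated
    return centroids


def distance_to_nearest(v: float, centroids: List[float]) -> float:
    return min(abs(v - c) for c in centroids)


def fit_detector(history: List[float], k: int, factor: float = 3.0) -> Tuple[List[float], float]:
    """Fit on normal history; threshold = factor x the largest training distance (assumed rule)."""
    centroids = kmeans_1d(history, k)
    threshold = factor * max(distance_to_nearest(v, centroids) for v in history)
    return centroids, threshold


def flag_anomalies(new_values: List[float], centroids: List[float], threshold: float) -> List[bool]:
    return [distance_to_nearest(v, centroids) > threshold for v in new_values]


# Example: a metric with two normal operating levels (made-up values for illustration).
history = [10.0, 10.5, 11.0, 9.5, 20.0, 20.5, 21.0, 19.5]
centroids, threshold = fit_detector(history, k=2)
print(centroids, threshold)  # [10.25, 20.25] 2.25
print(flag_anomalies([10.2, 20.1, 35.0, 15.0], centroids, threshold))  # [False, False, True, True]
```

Fitting only on history assumed to be normal matters here: if anomalies were included in the training data, k-means could dedicate a centroid to them and score them as normal.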

Our experience and achievements range from visualizing data directly from Kafka streams, while controlling Flink processors in real time for filtering, to using standard tools such as Grafana, Kibana, and Superset with data sources ranging from relational to OLAP databases.

Using the Kafka message broker, we set up data flows in which scalable components take raw data, filter, split, aggregate, route, and batch it, and finally serve it to the ML model(s), while also maintaining a metadata store (PostgreSQL). This whole concept of plugging ML models that satisfy both the north- and south-bound interface requirements into the pipeline is what we call Marketplace.
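The stages of such a flow can be sketched in plain Python, with each stage as a small function chained over an in-memory list of messages. This is a minimal illustration under stated assumptions: in a real deployment the messages would be consumed from Kafka topics and each stage would run as its own scalable component; the message shape (`ts`, `metrics`), the stage names, and the `Model` callable (a hypothetical stand-in for the interface a Marketplace model must satisfy) are all invented for illustration, and the PostgreSQL metadata store is not modelled.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable, Iterator, List, Tuple

# Hypothetical model interface: takes a batch of values, returns one output per value.
Model = Callable[[List[float]], List[float]]


def filter_stage(messages: Iterable[dict]) -> Iterator[dict]:
    """Filtering: drop messages that carry no 'metrics' mapping."""
    for msg in messages:
        if isinstance(msg.get("metrics"), dict):
            yield msg


def split_stage(messages: Iterable[dict]) -> Iterator[Tuple[str, int, float]]:
    """Splitting: turn one multi-metric message into (metric, ts, value) tuples."""
    for msg in messages:
        for name, value in msg["metrics"].items():
            yield name, msg["ts"], float(value)


def aggregate_stage(points: Iterable[Tuple[str, int, float]], bucket_seconds: int) -> Dict[str, List[float]]:
    """Aggregating: average each metric per time bucket, ordered by bucket."""
    totals: Dict[Tuple[str, int], float] = defaultdict(float)
    counts: Dict[Tuple[str, int], int] = defaultdict(int)
    for name, ts, value in points:
        key = (name, ts // bucket_seconds)
        totals[key] += value
        counts[key] += 1
    series: Dict[str, List[float]] = defaultdict(list)
    for key in sorted(totals):
        series[key[0]].append(totals[key] / counts[key])
    return dict(series)


def batch(values: List[float], size: int) -> Iterator[List[float]]:
    """Batching: yield fixed-size chunks (the last one may be shorter)."""
    for i in range(0, len(values), size):
        yield values[i:i + size]


def run_pipeline(messages: Iterable[dict], models: Dict[str, Model],
                 bucket_seconds: int = 60, batch_size: int = 2) -> Dict[str, List[float]]:
    """Routing and serving: send each metric's batches to the model registered for it."""
    outputs: Dict[str, List[float]] = defaultdict(list)
    series = aggregate_stage(split_stage(filter_stage(messages)), bucket_seconds)
    for name, values in series.items():
        model = models.get(name)
        if model is None:  # no model registered for this metric: skip it
            continue
        for chunk in batch(values, batch_size):
            outputs[name].extend(model(chunk))
    return dict(outputs)


messages = [
    {"ts": 0, "metrics": {"cpu": 0.25, "mem": 0.5}},
    {"ts": 30, "metrics": {"cpu": 0.75}},
    {"ts": 60, "metrics": {"cpu": 1.0, "mem": 0.7}},
    {"ts": 90, "bad": True},  # malformed: dropped by the filter stage
]
models = {"cpu": lambda xs: [x * 100 for x in xs]}  # toy "model": scale to percent
print(run_pipeline(messages, models))  # {'cpu': [50.0, 100.0]}
```

Keeping each stage as an independent function mirrors the idea that every step can be scaled or replaced separately, and routing by metric name is one simple way to let new models be plugged in without touching the other stages.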